
Data Science team at SHC

We are a new team in Technology and Digital Solutions (TDS), announced in March 2022. Our charge is to carry out Stanford Health Care’s long-term vision of harnessing artificial intelligence to support and enhance every aspect of health care delivery, AI research, and medical education.

- If you are at SHC or SOM, visit the Data Science Team's Intranet Site.

- If you are an applicant interested in joining the team, visit Careers at Stanford Healthcare.

- Current open position: ML Ops Engineer (AI/ML)

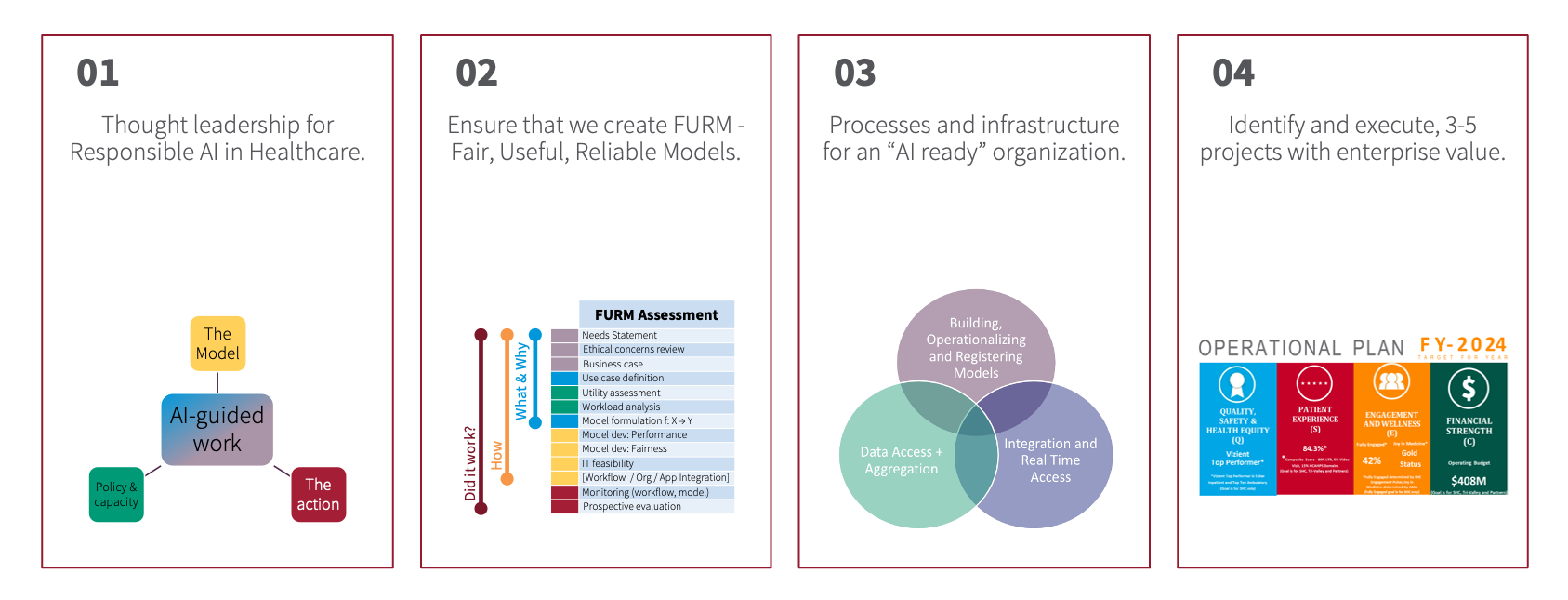

Our team's work centers on how Stanford Health Care accelerates innovation in artificial intelligence, from development and implementation to maintenance and optimization. This work spans the four areas of activity outlined below.

dsatshc.txt · Last modified: 2023/11/15 10:21 by nikesh